PostgreSQL 14 Database Refresher :: Working with JSON & Node.js

PostgreSQL Setup

Docker

I am going to use the official Docker image to set up the SQL Database on a Debian Bullseye server:

docker run -d --rm \

--name postgres \

-e POSTGRES_PASSWORD=secretpassword \

-p 5432:5432 \

postgres:14

I can now access the container and connect to the Postgres CLI:

docker exec -ti -u postgres postgres psql

psql (14.0 (Debian 14.0-1.pgdg110+1))

Type "help" for help.

postgres=#

Create a Database

CREATE DATABASE books;

Switch to using the new books table instead of the default postgres:

\connect books;

You are now connected to database "books" as user "postgres".

Add a Table

CREATE TABLE the_expanse (

book_id INTEGER PRIMARY KEY GENERATED ALWAYS AS IDENTITY,

title VARCHAR ( 25 ) UNIQUE NOT NULL,

isbn VARCHAR ( 25 ) UNIQUE NOT NULL,

year INT,

pages INT,

created_on TIMESTAMP NOT NULL

);

Add Data

INSERT INTO the_expanse

(title, isbn, year, pages, created_on)

VALUES

('Leviathan Wakes', '978-0-316-12908-4', 2011, 592, NOW() - interval '1256 days'),

('Calibans War', '978-1-841-49990-1', 2012, 595, NOW() - interval '993 days'),

('Abaddons Gate', '978-0-316-12907-7', 2013, 539, NOW() - interval '765 days'),

('Cibola Burn', '978-0-316-21762-0', 2014, 583, NOW() - interval '543 days'),

('Nemesis Games', '978-0-316-21758-3', 2015, 544, NOW() - interval '267 days'),

('Babylons Ashes', '978-0-316-33474-7', 2016, 608, NOW() - interval '189 days'),

('Persepolis Rising', '978-0-316-33283-5', 2017, 560, NOW() - interval '122 days'),

('Tiamats Wrath', '978-0-316-33286-6', 2019, 544, NOW() - interval '98 days'),

('Leviathan Falls', '978-0-356-51039-2', 2021, 528, NOW() - interval '21 days');

SELECT * FROM the_expanse

;

book_id | title | isbn | year | pages | created_on

---------+-------------------+-------------------+------+-------+----------------------------

1 | Leviathan Wakes | 978-0-316-12908-4 | 2011 | 592 | 2018-05-03 08:42:05.608243

2 | Calibans War | 978-1-841-49990-1 | 2012 | 595 | 2019-01-21 08:42:05.608243

3 | Abaddons Gate | 978-0-316-12907-7 | 2013 | 539 | 2019-09-06 08:42:05.608243

4 | Cibola Burn | 978-0-316-21762-0 | 2014 | 583 | 2020-04-15 08:42:05.608243

5 | Nemesis Games | 978-0-316-21758-3 | 2015 | 544 | 2021-01-16 08:42:05.608243

6 | Babylons Ashes | 978-0-316-33474-7 | 2016 | 608 | 2021-04-04 08:42:05.608243

7 | Persepolis Rising | 978-0-316-33283-5 | 2017 | 560 | 2021-06-10 08:42:05.608243

9 | Tiamats Wrath | 978-0-316-33286-6 | 2019 | 544 | 2021-07-04 08:44:16.827956

10 | Leviathan Falls | 978-0-356-51039-2 | 2021 | 528 | 2021-09-19 08:44:16.827956

(9 rows)

Node.js

Connecting to your Database

Let's build a Node.js project that utilizes our Postgres database with help of the node-postgres package:

npm init -y

npm install pg

touch server.js

In server.js, put this (change the pg parameter according to your Postgres setup):

const pg = require('pg');

const pgURL = '192.168.2.111'

const pgPort = '5432'

const pgUser = 'postgres'

const pgPass = 'secretpassword'

const cs = 'postgresql://'+pgUser+':'+pgPass+'@'+pgURL+':'+pgPort+'/books';

const client = new pg.Client(cs);

client.connect();

const sql = 'SELECT * FROM the_expanse LIMIT $1';

const values = ['9'];

client.query(sql, values).then(res => {

const data = res.rows;

data.forEach(row => console.log(row));

}).finally(() => {

client.end()

});

And run the script in Node:

node server.js

{

book_id: 1,

title: 'Leviathan Wakes',

isbn: '978-0-316-12908-4',

year: 2011,

pages: 592,

created_on: 2018-05-03T00:42:05.608Z

}

{

book_id: 2,

title: 'Calibans War',

isbn: '978-1-841-49990-1',

year: 2012,

pages: 595,

created_on: 2019-01-21T00:42:05.608Z

}

{

book_id: 3,

title: 'Abaddons Gate',

isbn: '978-0-316-12907-7',

year: 2013,

pages: 539,

created_on: 2019-09-06T00:42:05.608Z

}

{

book_id: 4,

title: 'Cibola Burn',

isbn: '978-0-316-21762-0',

year: 2014,

pages: 583,

created_on: 2020-04-15T00:42:05.608Z

}

{

book_id: 5,

title: 'Nemesis Games',

isbn: '978-0-316-21758-3',

year: 2015,

pages: 544,

created_on: 2021-01-16T00:42:05.608Z

}

{

book_id: 6,

title: 'Babylons Ashes',

isbn: '978-0-316-33474-7',

year: 2016,

pages: 608,

created_on: 2021-04-04T00:42:05.608Z

}

{

book_id: 7,

title: 'Persepolis Rising',

isbn: '978-0-316-33283-5',

year: 2017,

pages: 560,

created_on: 2021-06-10T00:42:05.608Z

}

{

book_id: 9,

title: 'Tiamats Wrath',

isbn: '978-0-316-33286-6',

year: 2019,

pages: 544,

created_on: 2021-07-04T00:44:16.827Z

}

{

book_id: 10,

title: 'Leviathan Falls',

isbn: '978-0-356-51039-2',

year: 2021,

pages: 528,

created_on: 2021-09-19T00:44:16.827Z

}

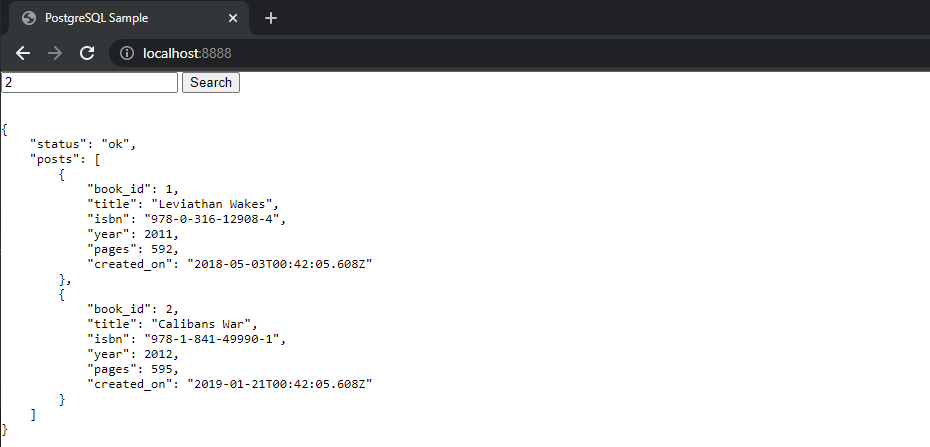

Adding a Frontend

npm install express

mkdir static

touch static/index.html server_express.js

In static/index.html put:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8" />

<meta name="viewport" content="width=device-width, initial-scale=1.0" />

<title>PostgreSQL Sample</title>

</head>

<body>

<input type="text" placeholder="Type 1 - 9" id="search" />

<button id="btn">Search</button>

<pre>

<code id="code"></code>

</pre>

<script>

const btn = document.getElementById("btn");

const code = document.getElementById("code");

const search = document.getElementById("search");

btn.addEventListener("click", async () => {

code.innerText = "loading";

const res = await fetch(

"/get?search=" + encodeURIComponent(search.value)

);

const json = await res.json();

code.innerText = "\n" + JSON.stringify(json, null, 4);

});

</script>

</body>

</html>

In server_express.js:

const express = require("express");

const { Pool } = require("pg");

const PORT = process.env.PORT || 8888;

const pgURL = '192.168.2.111'

const pgPort = '5432'

const pgUser = 'postgres'

const pgPass = 'secretpassword'

const pool = new Pool({

connectionString:

'postgresql://'+pgUser+':'+pgPass+'@'+pgURL+':'+pgPort+'/books',

});

async function init() {

const app = express();

app.get("/get", async (req, res) => {

const client = await pool.connect();

const [booksRes] = await Promise.all([

client.query(

// Never put user inputs directly into the SQL query

// `SELECT * FROM comments NATURAL LEFT JOIN rich_content WHERE board_id = ${req.query.search}`

// Always parametrize it so that the PG driver can clean it up to prevent SQL injections.

"SELECT * FROM the_expanse LIMIT $1",

[req.query.search]

)

]);

res

.json({

status: "ok",

posts: booksRes.rows,

})

.end();

await client.end();

});

app.use(express.static("./static"));

app.listen(PORT);

console.log(`running on http://localhost:${PORT}`);

}

init();

And run the app:

node server_express.js

running on http://localhost:8888

JSON Types

There are two JSON data types: json and jsonb. They accept almost identical sets of values as input. The major practical difference is one of efficiency. The json data type stores an exact copy of the input text, which processing functions must reparse on each execution; while jsonb data is stored in a decomposed binary format that makes it slightly slower to input due to added conversion overhead, but significantly faster to process, since no reparsing is needed. jsonb also supports indexing, which can be a significant advantage.

Because the json type stores an exact copy of the input text, it will preserve semantically-insignificant white space between tokens, as well as the order of keys within JSON objects. Also, if a JSON object within the value contains the same key more than once, all the key/value pairs are kept. (The processing functions consider the last value as the operative one.) By contrast, jsonb does not preserve white space, does not preserve the order of object keys, and does not keep duplicate object keys. If duplicate keys are specified in the input, only the last value is kept.

In general, most applications should prefer to store JSON data as jsonb, unless there are quite specialized needs, such as legacy assumptions about ordering of object keys.

Define a Column in a Table

CREATE TABLE the_expanse_tv (

episode_id INTEGER PRIMARY KEY GENERATED ALWAYS AS IDENTITY,

season_id INT NOT NULL,

content JSONB NOT NULL

);

Insert JSON Data

INSERT INTO the_expanse_tv

(season_id, content)

VALUES

(1, '{"title":"Dulcinea","imdb-rating":"7.7","air-date":"2015-11-23","abstract":"In the asteroid belt near Saturn, James Holden and the crew of the ice freighter Canterbury, on its way to Ceres Station, investigate a distress call from an unknown derelict ship, the Scopuli."}'),

(1, '{"title":"The Big Empty","imdb-rating":"7.7","air-date":"2015-12-15","abstract":"Holden and crew are trapped in a badly damaged shuttle. On Ceres, Miller uncovers clues about Julie Mao. On Earth, Chrisjen Avasarala questions a terrorist."}'),

(1, '{"title":"Remember the Cant","imdb-rating":"8.0","air-date":"2015-12-16","abstract":"While Avasarala plays a dangerous game of politics, Holden and his crew are forced to turn on one another while held captive by the Martian Navy."}'),

(1, '{"title":"CQB","imdb-rating":"8.7","air-date":"2015-12-16","abstract":"Holden and crew are caught in the middle of a desperate battle as mysterious war ships attack and board the Donnager. As Miller continues to investigate Julie Mao, his partner Havelock continues to go missing."}'),

(1, '{"title":"Back to the Butcher","imdb-rating":"7.8","air-date":"2016-01-05","abstract":"The crew has survived the loss of the Canterbury and the Donnager and are contacted by an unlikely ally. Miller, on Ceres, continues his investigation and his thoughts of a conspiracy grow."}'),

(1, '{"title":"Rock Bottom","imdb-rating":"8.0","air-date":"2016-01-12","abstract":"Holdens team makes an uneasy alliance with Fred Johnson while Miller fights for his life against Anderson Dawes thugs."}'),

(1, '{"title":"Windmills","imdb-rating":"7.9","air-date":"2016-01-19","abstract":"Holden and crew realize that they qre not alone on the Rocinante and find themselves up against a Martian Marine blockade. Millers dark night of the soul, believing all is lost, finds a new reason to keep going. Avasarala visits Holdens family in Montana."}'),

(1, '{"title":"Salvage","imdb-rating":"8.7","air-date":"2016-01-26","abstract":"A derelict vessel holds a potentially devastating secret. Holden and his crew cross paths with Miller on Eros. Avasarala receives bad news."}'),

(1, '{"title":"Critical Mass","imdb-rating":"8.7","air-date":"2016-02-02","abstract":"A flashback to Julies origin story reveals her trajectory. Holden and Miller finally meet and team up to get to the bottom of the strange emergency situation happening on Eros. As the true horror of the events on Eros is revealed, an ailing Holden and Miller must overcome incredible odds if they hope to live to fight another day. Part 1 of 2"}'),

(1, '{"title":"Leviathan Wakes","imdb-rating":"8.7","air-date":"2016-02-02","abstract":"A flashback to Julies origin story reveals her trajectory. Holden and Miller finally meet and team up to get to the bottom of the strange emergency situation happening on Eros. As the true horror of the events on Eros is revealed, an ailing Holden and Miller must overcome incredible odds if they hope to live to fight another day. Part 2 of 2"}');

select column_name, data_type from information_schema.columns where table_name = 'the_expanse_tv';

column_name | data_type

-------------+-----------

episode_id | integer

season_id | integer

content | jsonb

(3 rows)

Query Data

SELECT content->'title' AS title FROM the_expanse_tv;

title

-----------------------

"Dulcinea"

"The Big Empty"

"Remember the Cant"

"CQB"

"Back to the Butcher"

"Rock Bottom"

"Windmills"

"Salvage"

"Critical Mass"

"Leviathan Wakes"

(10 rows)