TensorFlow DeepDream

- Prepare the Feature Extraction Model

- Prepare your Image

- Calculate Loss

- Gradient Ascent

- Main Loop

- Finetuning

DeepDream is an experiment that visualizes the patterns learned by a neural network. Similar to when a child watches clouds and tries to interpret random shapes, DeepDream over-interprets and enhances the patterns it sees in an image.

It does so by forwarding an image through the network, then calculating the gradient of the activations of a particular layer with respect to the image. The image is then modified to increase these activations, enhancing the patterns seen by the network and resulting in a dream-like image.

The following code is taken from one of the official TensorFlow tutorials.

Deep Dream Steps:

- Feed an image into a trained neural network

- Choose a layer (or layers) of interest. Different layers produce different dream-like images: deeper layers respond to higher-level features (such as eyes and faces), while earlier layers respond to simpler features (such as edges, shapes, and textures).

- Calculate the activations coming out from the layer of interest

- Calculate the gradient of the activations with respect to the input image

- Add features to your image that will increase those activations

- Iterate and repeat
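The steps above can be sketched without TensorFlow on a toy problem. In this minimal illustration, the "image" is a list of three pixel values and the "layer" is a single ReLU unit with fixed weights. The names `activation`, `gradient`, and `dream`, as well as all the numbers, are hypothetical stand-ins and not part of the tutorial code.

```python
def activation(pixels, weights):
    """Toy 'layer': ReLU of a weighted sum of the pixels."""
    return max(0.0, sum(w * p for w, p in zip(weights, pixels)))


def gradient(pixels, weights):
    """Gradient of the activation with respect to each pixel.

    For a ReLU unit this is the weight vector while the unit is active,
    and zero otherwise.
    """
    pre_activation = sum(w * p for w, p in zip(weights, pixels))
    return [w if pre_activation > 0 else 0.0 for w in weights]


def dream(pixels, weights, steps=10, step_size=0.1):
    """Gradient ascent: repeatedly nudge the pixels to raise the activation."""
    for _ in range(steps):
        grads = gradient(pixels, weights)
        # Add the gradient to the image, then clip to the [-1, 1] range.
        pixels = [min(1.0, max(-1.0, p + step_size * g))
                  for p, g in zip(pixels, grads)]
    return pixels


weights = [0.5, -1.0, 0.25]   # assumed, fixed "trained" weights
start = [0.2, 0.0, 0.1]       # assumed starting "image"
end = dream(start, weights)

print("activation before:", activation(start, weights))
print("activation after: ", activation(end, weights))
```

The activation after the loop is larger than before, which is the whole point of the procedure: the input is changed, not the weights.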

Prepare the Feature Extraction Model

Pre-trained models are available for several architectures. This walkthrough uses InceptionV3 with ImageNet weights as the base model, which can be downloaded directly using Keras:

import tensorflow as tf

# Load the base model: InceptionV3 pre-trained on ImageNet, without the classification head
base_model = tf.keras.applications.InceptionV3(include_top=False, weights='imagenet')

The idea in DeepDream is to choose a layer (or layers) and maximize the "loss" so that the image increasingly "excites" those layers. The complexity of the features incorporated depends on the layers you choose: lower layers produce strokes or simple patterns, while deeper layers produce sophisticated features, or even whole objects.

The layers of interest are those where the convolutions are concatenated. There are 11 of these layers, named mixed0 through mixed10. Deeper layers (those with a higher index) take longer to train on, since the gradient computation is deeper.

# Maximize the activations of these layers
names = ['mixed3', 'mixed5']
layers = [base_model.get_layer(name).output for name in names]

# Create the feature extraction model
dream_model = tf.keras.Model(inputs=base_model.input, outputs=layers)
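The eleven candidate layer names mentioned above follow a simple pattern, so they can be enumerated without loading the model. This is only a convenience sketch; `candidate_layers`, `shallow_choice`, and `deep_choice` are hypothetical names, and which layers give pleasing results is a matter of experimentation.

```python
# InceptionV3 names its concatenation layers mixed0 ... mixed10.
candidate_layers = [f"mixed{i}" for i in range(11)]

# Hypothetical selections: an early pair for simple textures,
# a late pair for more object-like features.
shallow_choice = candidate_layers[:2]
deep_choice = candidate_layers[-2:]

print(candidate_layers)
print("shallow:", shallow_choice)
print("deep:   ", deep_choice)
```

Any of these lists could be used in place of `names` when building the feature extraction model.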

Prepare your Image

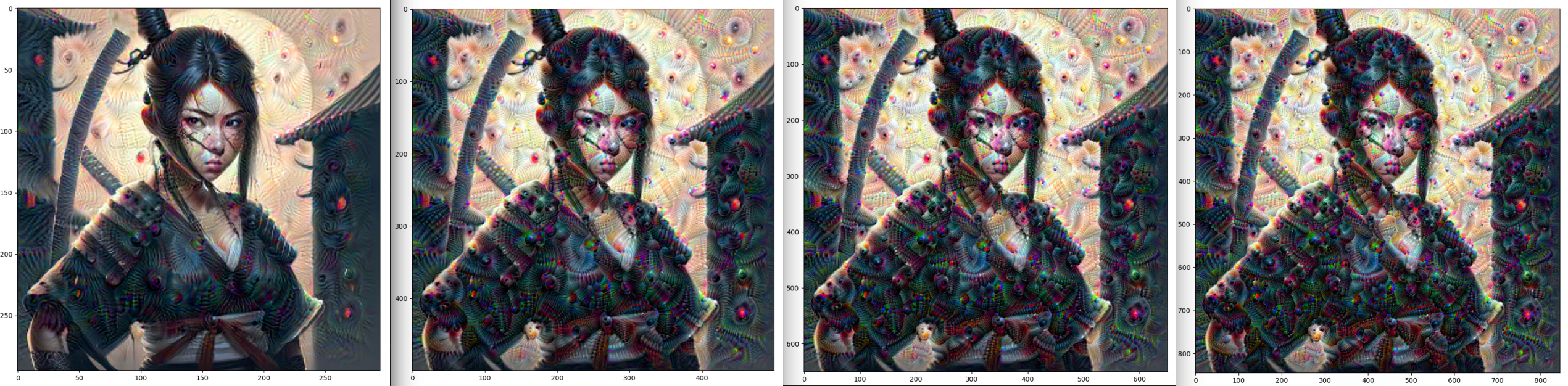

import numpy as np
from PIL import Image

url = 'https://img.freepik.com/premium-photo/cartoon-ninja-girl-beautiful-japanese-ninja-girl-concept-art-digital-painting-fantasy-illustration_743201-2848.jpg'

# Download an image and read it into a NumPy array.
def download(url, max_dim=None):
    name = url.split('/')[-1]
    image_path = tf.keras.utils.get_file(name, origin=url)
    img = Image.open(image_path)
    if max_dim:
        # Shrink in place so that neither side exceeds max_dim (aspect ratio is kept).
        img.thumbnail((max_dim, max_dim))
    return np.array(img)

# Map an image from the model's [-1, 1] range back to uint8 pixel values in [0, 255].
def deprocess(img):
    img = 255*(img + 1.0)/2.0
    return tf.cast(img, tf.uint8)

# Downsizing the image makes it easier to work with.
original_img = download(url, max_dim=500)
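The value ranges involved can be checked with plain arithmetic. The sketch below assumes that the InceptionV3 preprocessing maps pixel values from [0, 255] to [-1, 1] via x / 127.5 - 1, and mirrors `deprocess` for the way back. `to_model_range` and `to_pixel_range` are illustrative names, not TensorFlow functions.

```python
def to_model_range(pixel):
    """Map a pixel value in [0, 255] to [-1, 1] (assumed InceptionV3 scaling)."""
    return pixel / 127.5 - 1.0


def to_pixel_range(value):
    """Map a value in [-1, 1] back to [0, 255], truncating like an integer cast."""
    return int(255 * (value + 1.0) / 2.0)


for pixel in (0, 255):
    print(pixel, "->", to_model_range(pixel))

for value in (-1.0, 0.0, 1.0):
    print(value, "->", to_pixel_range(value))
```

Because the last step truncates rather than rounds, a mid-gray value of 0.0 in model space comes back as 127, not 128.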

Calculate Loss

The loss is the sum of the mean activations of the chosen layers. Taking the mean normalizes the loss at each layer so that the contribution from larger layers does not outweigh that of smaller layers. Normally, loss is a quantity you wish to minimize via gradient descent. In DeepDream, you will maximize this loss via gradient ascent.

def calc_loss(img, model):
    # Pass forward the image through the model to retrieve the activations.
    # Converts the image into a batch of size 1.
    img_batch = tf.expand_dims(img, axis=0)  # Convert into batch format
    layer_activations = model(img_batch)  # Run the model
    if len(layer_activations) == 1:
        layer_activations = [layer_activations]

    losses = []  # accumulator to hold all the losses
    # loop over activations and append losses to array
    for act in layer_activations:
        # calculate mean of each activation
        loss = tf.math.reduce_mean(act)
        # append loss for each activation
        losses.append(loss)

    # calculate sum
    return tf.reduce_sum(losses)
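To see why `calc_loss` averages each layer before summing, consider two layers whose units are equally active but which differ greatly in size. The following is a small pure-Python sketch; the layer sizes and activation values are made up for illustration.

```python
from statistics import fmean

# Two hypothetical "layers" with the same per-unit activation
# but very different numbers of units.
small_layer = [0.5] * 10
large_layer = [0.5] * 10_000

# Summing raw activations lets the larger layer dominate the loss.
sum_based = [sum(small_layer), sum(large_layer)]

# Averaging first puts both layers on the same footing.
mean_based = [fmean(small_layer), fmean(large_layer)]

print("per-layer sums: ", sum_based)
print("per-layer means:", mean_based)
print("loss (sum of means):", sum(mean_based))
```

With sums, the large layer would contribute a thousand times more to the loss than the small one; with means, both contribute equally.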

Gradient Ascent

Once you have calculated the loss for the chosen layers, all that is left is to calculate the gradients with respect to the image, and add them to the original image. Adding the gradients to the image enhances the patterns seen by the network.

class DeepDream(tf.Module):
    def __init__(self, model):
        self.model = model

    @tf.function(
        input_signature=(
            tf.TensorSpec(shape=[None,None,3], dtype=tf.float32),
            tf.TensorSpec(shape=[], dtype=tf.int32),
            tf.TensorSpec(shape=[], dtype=tf.float32),)
    )
    def __call__(self, img, steps, step_size):
        print("Tracing")
        loss = tf.constant(0.0)
        for n in tf.range(steps):
            with tf.GradientTape() as tape:
                # This needs gradients relative to `img`
                # `GradientTape` only watches `tf.Variable`s by default
                tape.watch(img)
                loss = calc_loss(img, self.model)

            # Calculate the gradient of the loss with respect to the pixels of the input image.
            gradients = tape.gradient(loss, img)

            # Normalize the gradients.
            gradients /= tf.math.reduce_std(gradients) + 1e-8

            # In gradient ascent, the "loss" is maximized so that the input image increasingly "excites" the layers.
            # You can update the image by directly adding the gradients (because they're the same shape!)
            img = img + gradients*step_size
            img = tf.clip_by_value(img, -1, 1)

        return loss, img

deepdream = DeepDream(dream_model)
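The gradient normalization in the update step is worth a closer look. Dividing by the standard deviation makes the size of each update depend on `step_size` rather than on the raw gradient magnitude. Here is a minimal sketch in plain Python, using the population standard deviation as `tf.math.reduce_std` does; `normalize` and the sample values are hypothetical.

```python
from statistics import pstdev


def normalize(grads, eps=1e-8):
    """Divide gradients by their standard deviation (eps avoids division by zero)."""
    scale = pstdev(grads) + eps
    return [g / scale for g in grads]


# Hypothetical raw gradients at two very different magnitudes.
small = [0.001, -0.002, 0.003, 0.0]
large = [g * 1000 for g in small]

norm_small = normalize(small)
norm_large = normalize(large)

print(norm_small)
print(norm_large)
```

Both lists come out nearly identical, with a standard deviation close to 1, so the same `step_size` behaves similarly regardless of how large the raw gradients are.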

Main Loop

import matplotlib.pyplot as plt

def run_deep_dream_simple(img, steps=100, step_size=0.01):
    # Convert from uint8 to the range expected by the model.
    img = tf.keras.applications.inception_v3.preprocess_input(img)
    img = tf.convert_to_tensor(img)
    step_size = tf.convert_to_tensor(step_size)
    steps_remaining = steps
    step = 0
    while steps_remaining:
        # Run at most 100 steps per call to the compiled `deepdream` function.
        if steps_remaining > 100:
            run_steps = tf.constant(100)
        else:
            run_steps = tf.constant(steps_remaining)
        steps_remaining -= run_steps
        step += run_steps

        loss, img = deepdream(img, run_steps, tf.constant(step_size))
        print("Step {}, loss {}".format(step, loss))

    # Convert back to uint8 and display the result.
    result = deprocess(img)
    plt.figure(figsize=(12,12))
    plt.imshow(result)
    plt.show()
    return result

dream_img = run_deep_dream_simple(img=original_img, steps=100, step_size=0.01)

Step 100, loss 2.1992263793945312
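`run_deep_dream_simple` hands the compiled `deepdream` function at most 100 steps at a time. The chunking arithmetic can be looked at in isolation with plain Python integers; `chunk_steps` is a hypothetical helper written for this illustration.

```python
def chunk_steps(total_steps, chunk_size=100):
    """Split total_steps into runs of at most chunk_size steps each."""
    chunks = []
    remaining = total_steps
    while remaining:
        run = min(remaining, chunk_size)
        chunks.append(run)
        remaining -= run
    return chunks


print(chunk_steps(100))   # [100]
print(chunk_steps(250))   # [100, 100, 50]
```

With the default `steps=100` there is a single chunk, which matches the single "Step 100" line printed above.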

Finetuning

Octaves

Pretty good, but the output is noisy. One approach that addresses this is to apply gradient ascent at different scales. This allows patterns generated at smaller scales to be incorporated into patterns at larger scales and filled in with additional detail. To do this, perform the previous gradient ascent approach, then increase the size of the image (each size is referred to as an octave), and repeat the process for multiple octaves.

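import time
from IPython import display

# Assumed helper: `show` is used below but is not defined in this article.
# This minimal version displays an image array inline in a notebook.
def show(img):
    display.display(Image.fromarray(np.array(img)))

start = time.time()

OCTAVE_SCALE = 1.30

img = tf.constant(np.array(original_img))
base_shape = tf.shape(img)[:-1]
float_base_shape = tf.cast(base_shape, tf.float32)

for n in range(-2, 3):
    # Scale the base shape by OCTAVE_SCALE**n, from the smallest octave to the largest.
    new_shape = tf.cast(float_base_shape*(OCTAVE_SCALE**n), tf.int32)
    img = tf.image.resize(img, new_shape).numpy()
    img = run_deep_dream_simple(img=img, steps=50, step_size=0.01)

display.clear_output(wait=True)
# Resize back to the original shape and convert to uint8 for display.
img = tf.image.resize(img, base_shape)
img = tf.image.convert_image_dtype(img/255.0, dtype=tf.uint8)
show(img)

end = time.time()
end-start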

Step 50, loss 1.9624563455581665
Step 50, loss 2.1029279232025146
Step 50, loss 2.0884666442871094
Step 50, loss 2.07767915725708
Step 50, loss 2.0698883533477783
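The five "Step 50" lines correspond to the five octaves in the loop. To get a feel for the image sizes involved, the shape computation can be reproduced in plain Python. The base shape below is a hypothetical (height, width), since the real one depends on the downloaded image, and `octave_shapes` is an illustrative helper. TensorFlow does this arithmetic in float32, so its results could differ by a pixel in edge cases.

```python
OCTAVE_SCALE = 1.30
base_shape = (400, 500)  # hypothetical (height, width) of the base image


def octave_shapes(base_shape, scale=OCTAVE_SCALE, octaves=range(-2, 3)):
    """Return the (height, width) used at each octave, truncated to integers."""
    return [tuple(int(dim * scale**n) for dim in base_shape) for n in octaves]


shapes = octave_shapes(base_shape)
for n, shape in zip(range(-2, 3), shapes):
    print(f"octave {n:+d}: {shape}")
```

Because the loop runs from the smallest size to the largest, coarse patterns are laid down first and then refined as the image grows past its original size.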

Scaling up with Tiles

One thing to consider is that as the image increases in size, so will the time and memory necessary to perform the gradient calculation. The above octave implementation will not work on very large images, or many octaves. To avoid this issue you can split the image into tiles and compute the gradient for each tile.

Applying random shifts to the image before each tiled computation prevents tile seams from appearing:

def random_roll(img, maxroll):
    # Randomly shift the image to avoid tiled boundaries.
    shift = tf.random.uniform(shape=[2], minval=-maxroll, maxval=maxroll, dtype=tf.int32)
    img_rolled = tf.roll(img, shift=shift, axis=[0,1])
    return shift, img_rolled

shift, img_rolled = random_roll(np.array(original_img), 512)
show(img_rolled)
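A roll is a circular shift: elements pushed off one end re-enter at the other, and rolling by the negative shift restores the original. The one-dimensional sketch below uses a hypothetical `roll` helper on a plain list to show this; `tf.roll` applies the same idea along each image axis.

```python
def roll(values, shift):
    """Circularly shift a list; positive shifts move elements toward higher indices."""
    n = len(values)
    shift %= n
    if shift == 0:
        return list(values)
    return values[-shift:] + values[:-shift]


row = [0, 1, 2, 3, 4]
rolled = roll(row, 2)
restored = roll(rolled, -2)

print(rolled)    # [3, 4, 0, 1, 2]
print(restored)  # [0, 1, 2, 3, 4]
```

This round trip is why gradients computed on the rolled image can be shifted back and added to the unrolled one.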

Next, incorporate the random shifts into a tiled equivalent of the deepdream function defined earlier:

class TiledGradients(tf.Module):
    def __init__(self, model):
        self.model = model

    @tf.function(
        input_signature=(
            tf.TensorSpec(shape=[None,None,3], dtype=tf.float32),
            tf.TensorSpec(shape=[2], dtype=tf.int32),
            tf.TensorSpec(shape=[], dtype=tf.int32),)
    )
    def __call__(self, img, img_size, tile_size=512):
        shift, img_rolled = random_roll(img, tile_size)

        # Initialize the image gradients to zero.
        gradients = tf.zeros_like(img_rolled)

        # Skip the last tile, unless there's only one tile.
        xs = tf.range(0, img_size[1], tile_size)[:-1]
        if not tf.cast(len(xs), bool):
            xs = tf.constant([0])
        ys = tf.range(0, img_size[0], tile_size)[:-1]
        if not tf.cast(len(ys), bool):
            ys = tf.constant([0])

        for x in xs:
            for y in ys:
                # Calculate the gradients for this tile.
                with tf.GradientTape() as tape:
                    # This needs gradients relative to `img_rolled`.
                    # `GradientTape` only watches `tf.Variable`s by default.
                    tape.watch(img_rolled)

                    # Extract a tile out of the image.
                    img_tile = img_rolled[y:y+tile_size, x:x+tile_size]
                    loss = calc_loss(img_tile, self.model)

                # Update the image gradients for this tile.
                gradients = gradients + tape.gradient(loss, img_rolled)

        # Undo the random shift applied to the image and its gradients.
        gradients = tf.roll(gradients, shift=-shift, axis=[0,1])

        # Normalize the gradients.
        gradients /= tf.math.reduce_std(gradients) + 1e-8

        return gradients
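The "skip the last tile, unless there's only one tile" rule is easier to follow with concrete numbers. The sketch below reproduces the tile-origin computation for one axis in plain Python; `tile_starts` is a hypothetical helper and the image sizes are made up.

```python
def tile_starts(size, tile_size=512):
    """Origins of the tiles along one axis, dropping the last one.

    If dropping the last origin leaves nothing, fall back to a single tile at 0.
    """
    starts = list(range(0, size, tile_size))[:-1]
    return starts or [0]


print(tile_starts(400))    # [0]
print(tile_starts(1024))   # [0]
print(tile_starts(1300))   # [0, 512]
```

For a 1300-pixel axis, the strip starting at 1024 is left out of a given call. A plausible reading is that the random roll applied on every call moves a different part of the image into that strip each time, so no region is skipped consistently.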